Model hosting patterns in Amazon SageMaker, Part 4: Design patterns for serial inference on Amazon SageMaker | AWS Machine Learning Blog

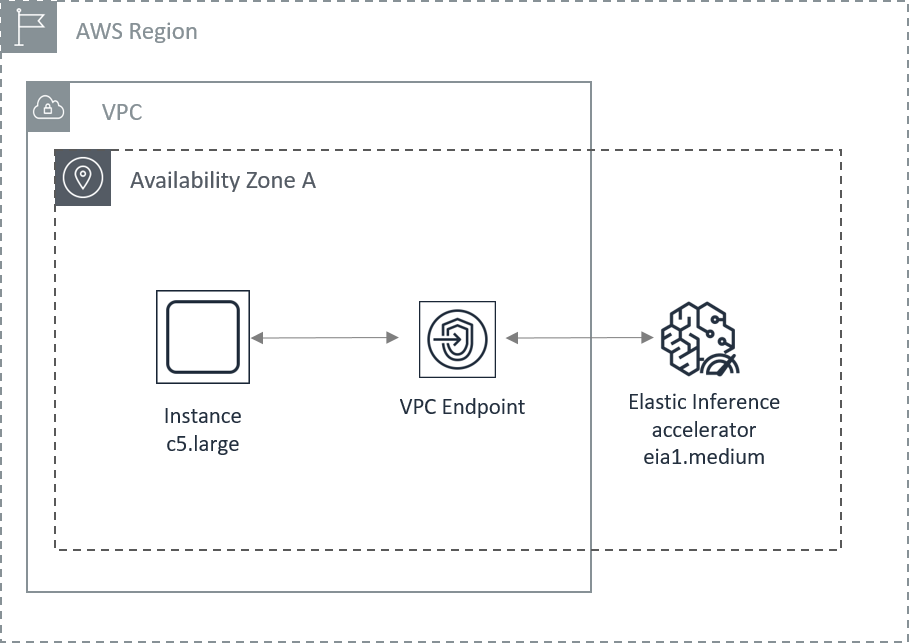

Amazon Web Services on X: "Introducing Amazon Elastic Inference: Reduce deep learning costs by up to 75% with low cost GPU-powered acceleration! #reInvent https://t.co/AY630jDINb https://t.co/cf2gBu6P9R"

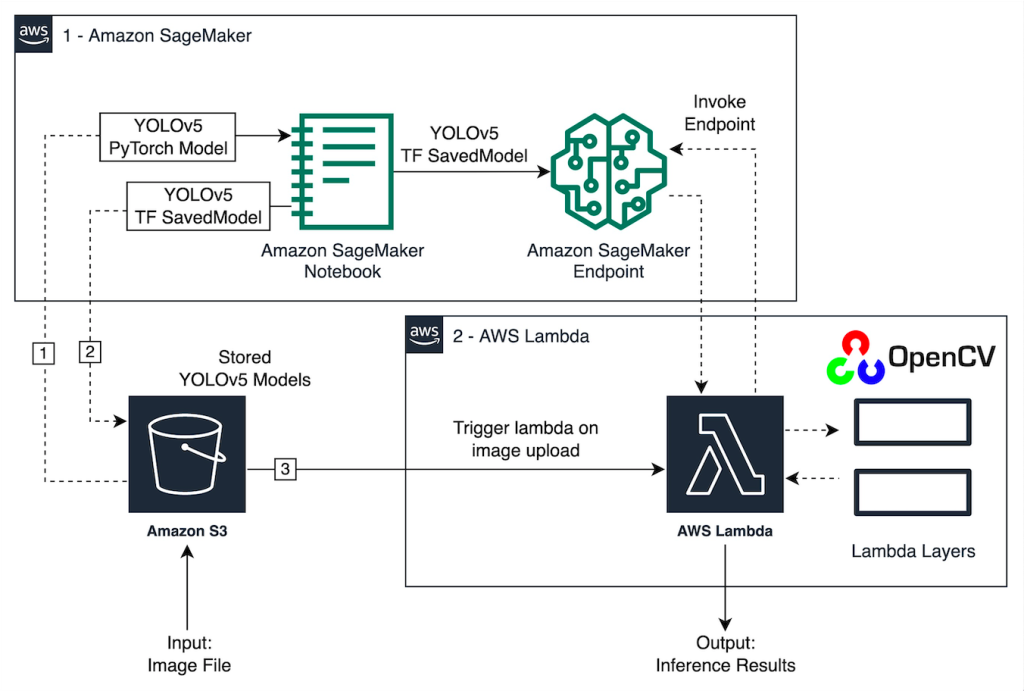

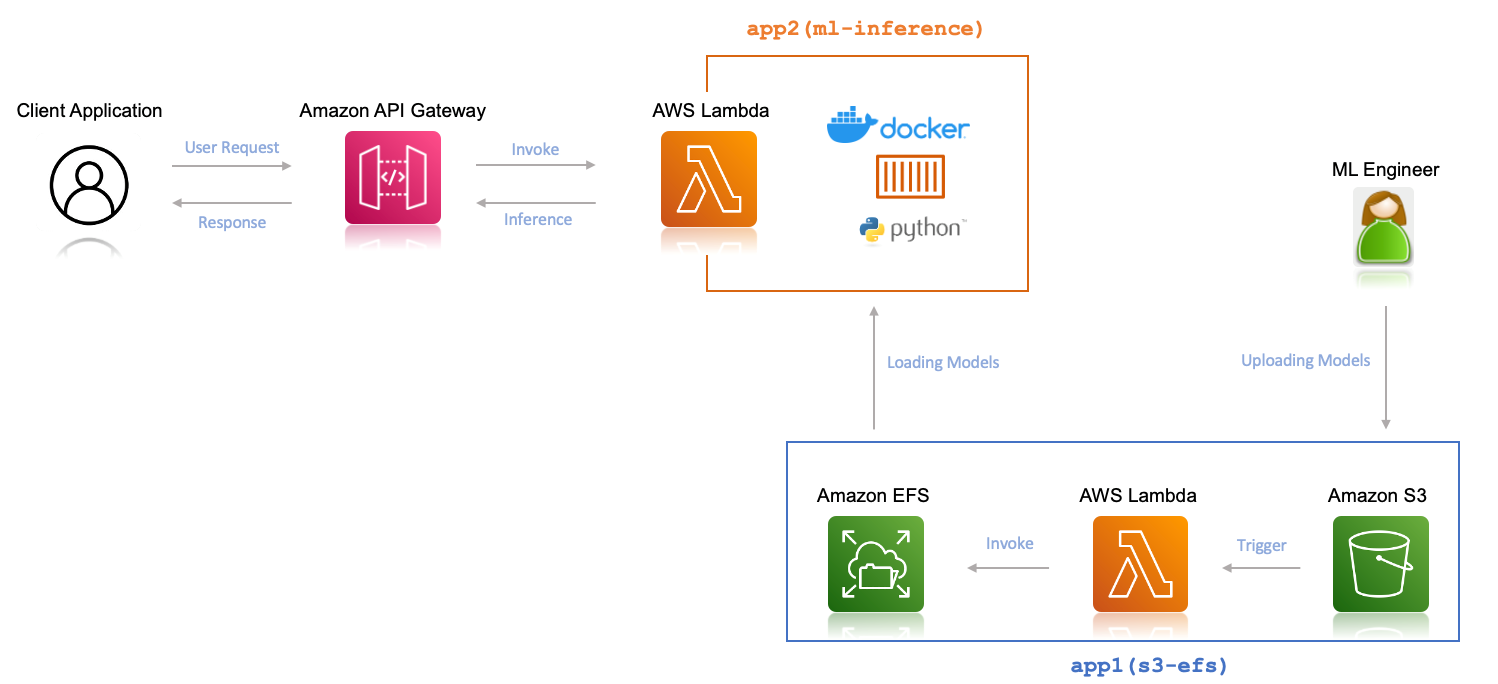

Deploy multiple machine learning models for inference on AWS Lambda and Amazon EFS | AWS Machine Learning Blog

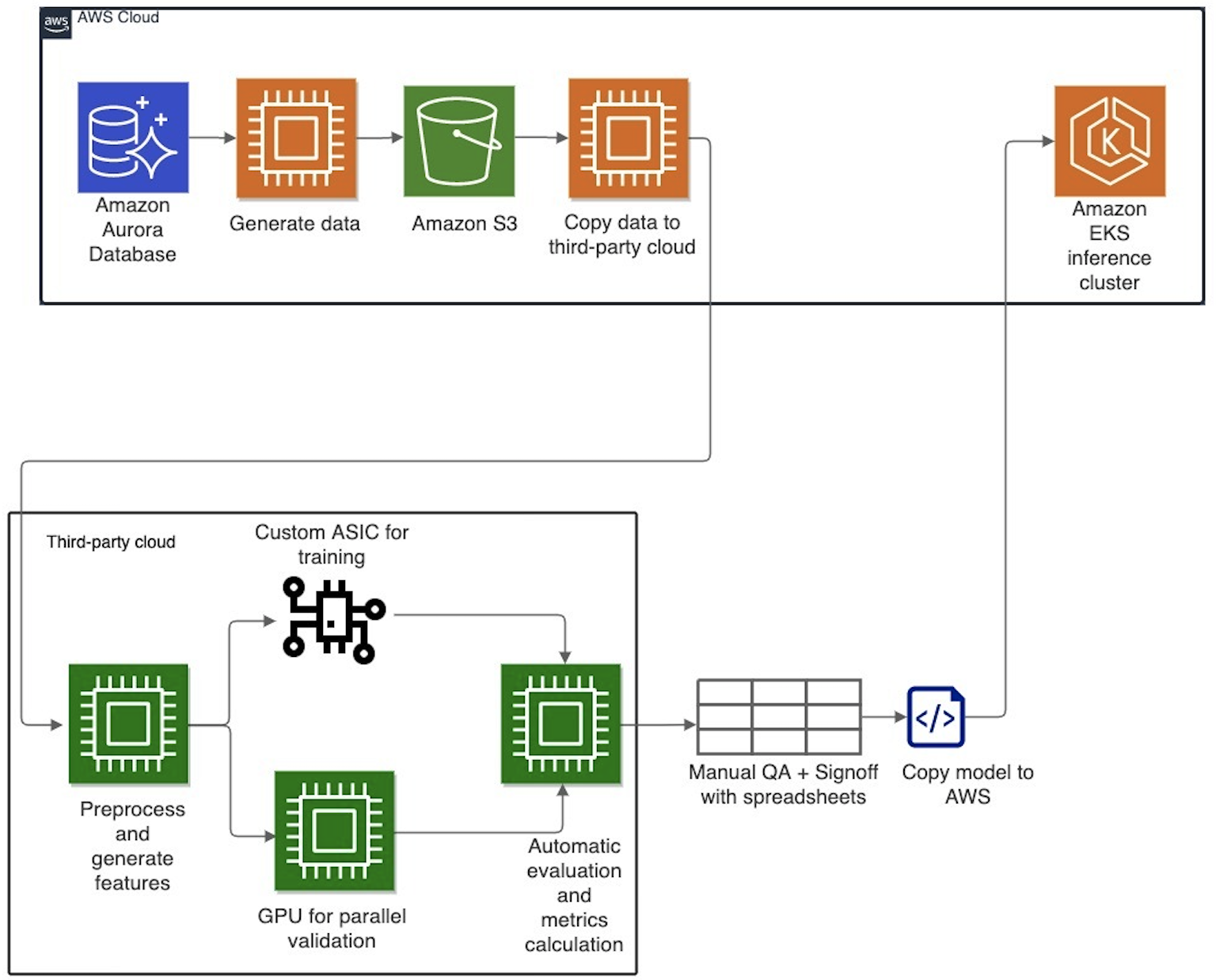

Evolution of Cresta's machine learning architecture: Migration to AWS and PyTorch | Data Integration

Maximize TensorFlow performance on Amazon SageMaker endpoints for real-time inference | AWS Machine Learning Blog

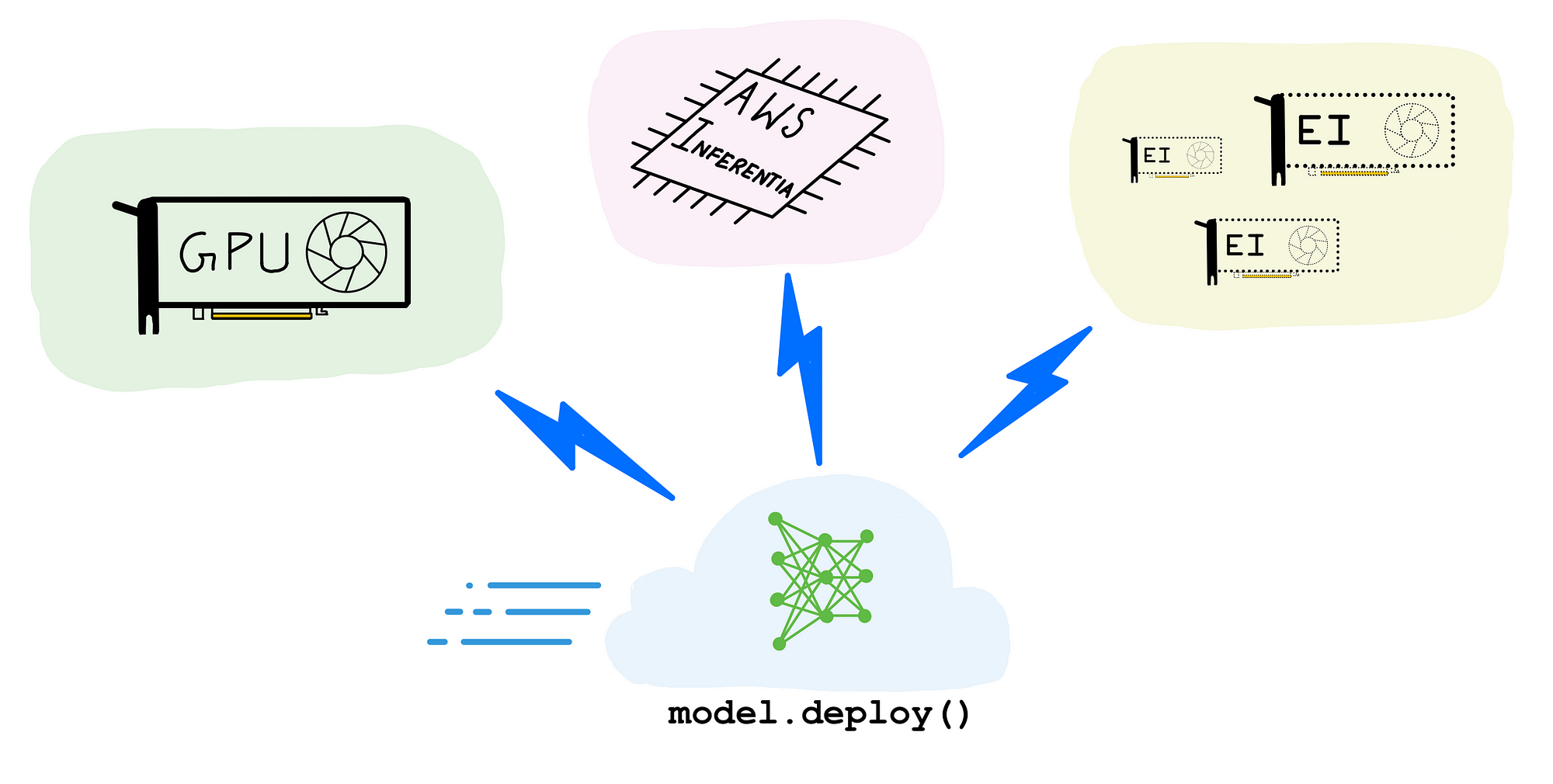

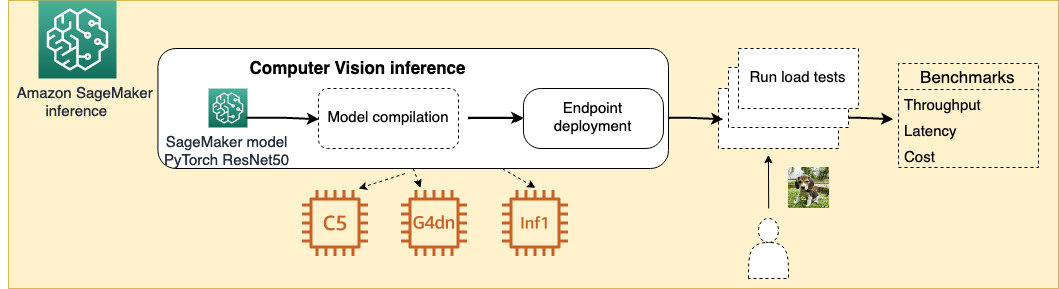

Choose the best AI accelerator and model compilation for computer vision inference with Amazon SageMaker | AWS Machine Learning Blog

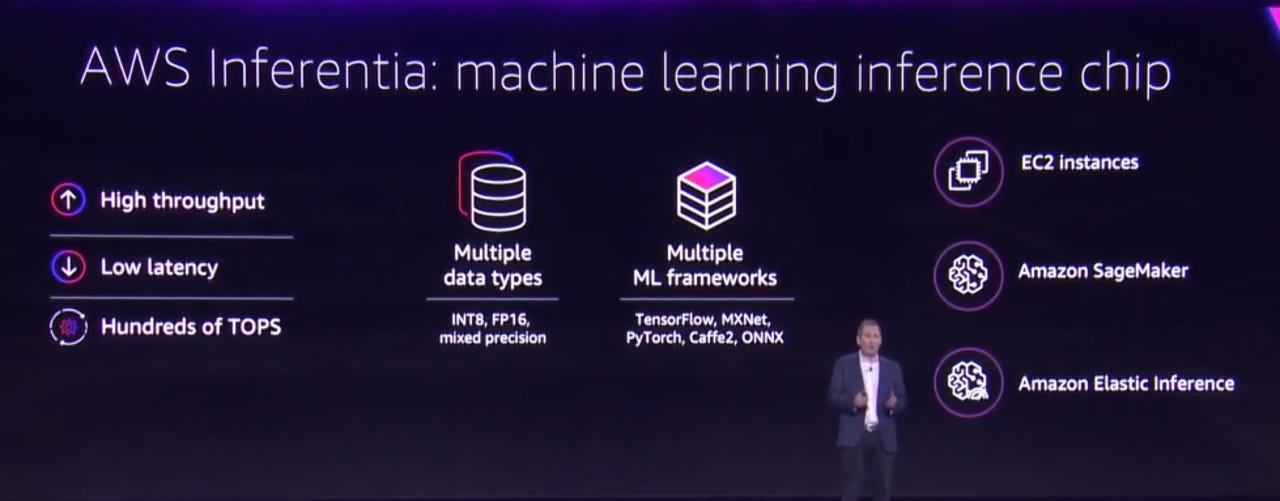

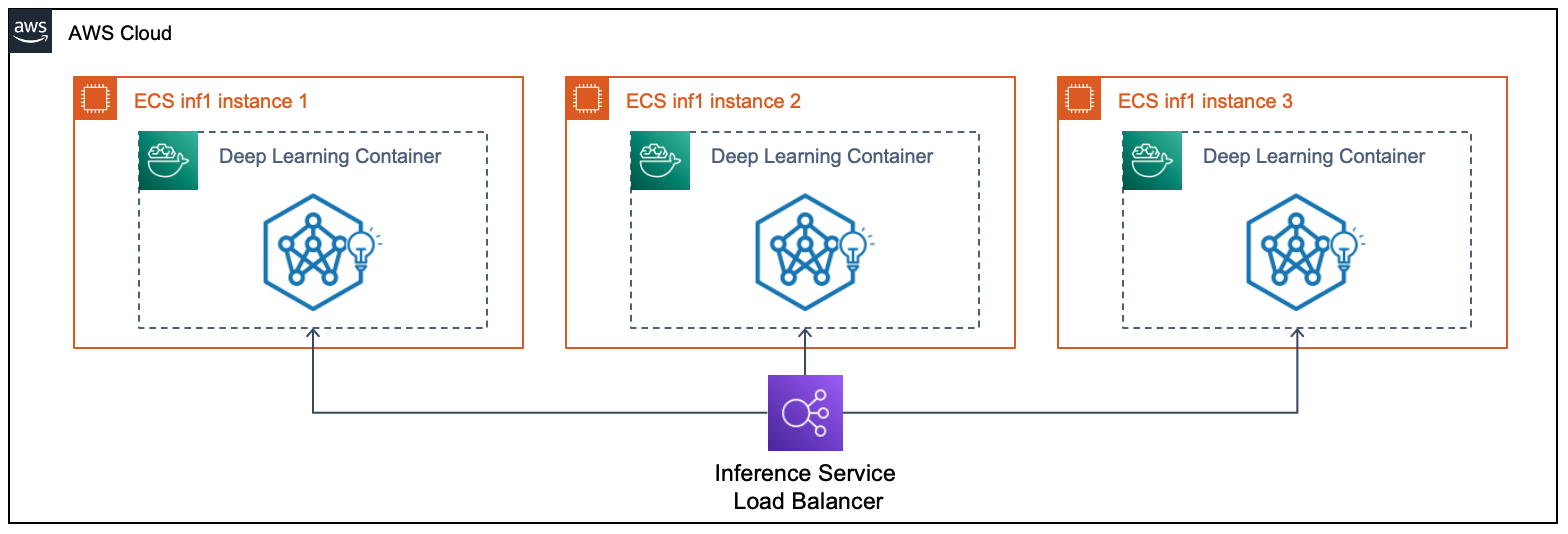

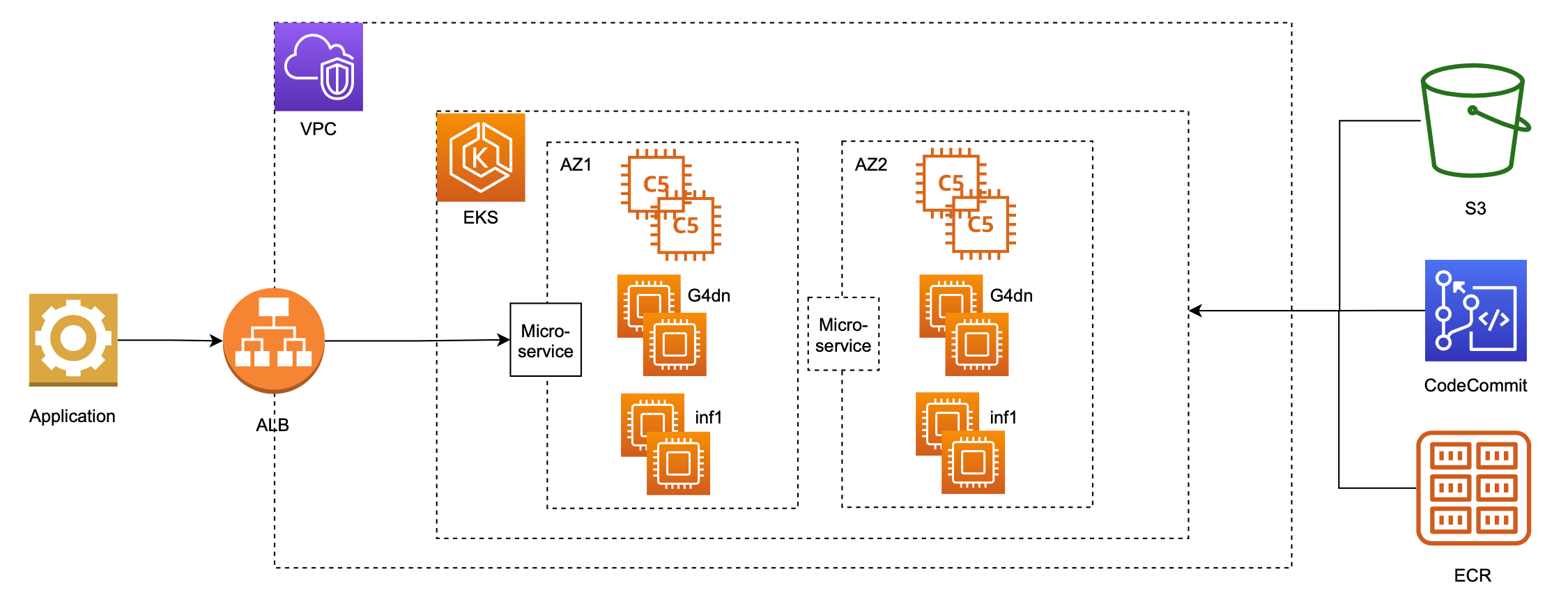

Serve 3,000 deep learning models on Amazon EKS with AWS Inferentia for under $50 an hour | AWS Machine Learning Blog